This is a continuation of an article that details exactly how my predictions and rankings are derived. You can read part 1 here. To recap, I'm using the Super Bowl match-up between the Steelers and Cardinals as an example. So far, we've used a logistic regression model based on team efficiency stats to estimate the probability each team will win.

This is a continuation of an article that details exactly how my predictions and rankings are derived. You can read part 1 here. To recap, I'm using the Super Bowl match-up between the Steelers and Cardinals as an example. So far, we've used a logistic regression model based on team efficiency stats to estimate the probability each team will win.

We haven't accounted for strength of schedule yet. For example, the Steelers may have the NFL's best run defense, yielding only 3.3 yds per rush. But is that because they're good or because their opponents happened to have poor running games?

To adjust for opponent strength, we'll first need to calculate each team’s generic win probability (GWP), or the probability of winning a game against a notional league-average opponent at a neutral site. This would give us a good estimate of a team’s expected winning percentage based on their stats.

Since we already know each team’s logit components, all we need to know is the NFL-average logit. If we take the average efficiency stats and apply the model coefficients we get Logit (Avg) = -2.52.

Therefore, for the Cardinals, a game against a notional average opponent would look like:

Logit = Logit (ARI) – Logit (Avg)

= 0.07

The odds ratio is e

0.07 = 1.09. Arizona’s GWP is 0.52—just barely above average. If we do the same thing for Pittsburgh, we get a GWP of 0.73. And it’s easy enough to do for all 32 teams. In fact, that’s what we need to do for our next step in the process, which is to adjust for average opponent strength.

The GWPs I calculated for Arizona and Pittsburgh were based on raw efficiency stats, unadjusted for opponent strength. That’s ok if we assume they had roughly the same strength of schedule. But often teams don’t, especially in the earlier weeks of the season.

To adjust for opponent strength, I could adjust each team efficiency stat according to the average opponents’ corresponding stat. In other words, I could adjust the Cardinals’ passing efficiency according to their opponents’ average defensive efficiency. I’d have to do that for all the stats in the model, which would be insanely complex. But I have a simpler method that produces the same results.

For each team, I average its to-date opponents’ GWP to measure strength of schedule. This season Arizona’s average opponent GWP was 0.51—essentially average. I can compute the average logit of Arizona’s opponents by reversing the process I’ve used so far.

The odds ratio for the Cardinals’ average opponent is 0.51/(1-0.51) = 1.03. The log of the odds ratio, or logit, is log(1.03) = 0.034. I can add that adjustment into the logit equation we used to get their original GWP.

Logit = Logit(ARI) – Logit(Avg) + 0.034

= 0.11

This makes the odds ratio e

0.11 = 1.12. Their GWP now becomes 0.53. If you think about it intuitively, this makes sense. Their unadjusted GWP was 0.51. They (apparently) had a slightly tougher schedule than average. So their true, underlying team strength should be slightly higher than we originally estimated.

I said ‘apparently’ because now that we’ve adjusted each teams GWP, that makes each team’s average opponent GWP different. So we have to repeat the process of averaging each team’s opponent GWP and redoing the logistic adjustment. I iterate this (usually 4 or 5 times) until the adjusted GWPs converge. In other words, they stop changing because each successive adjustment gets smaller as it zeroes in on the true value.

Ultimately, Arizona’s opponent GWP is 0.50 and Pittsburgh’s is 0.53. After a full season of 16 games, strength of schedule tends to even out. But earlier in the season one team might have faced a schedule averaging 0.65 while another may have faced one averaging 0.35.

My hunch is that it’s this opponent adjustment technique that gives this model its accuracy. It’s easy enough to look at a team’s record or stats to intuitively assess how good it is, but it’s far more difficult to get a good grasp of how inflated or deflated its reputation may be due to the aggregate strength or weakness of its opponents.

Now that we’ve determined opponent adjustments, we can apply them to the game probability calculations. The full logit now becomes:

Logit = const + home field + (Team A logit + Team A Opp logit) –

(Team B logit + Team B Opp logit)

Pittsburgh’s opponent logit is log(0.53/(1-0.53)) = 0.10 and Arizona’s is log(0.50/1-.50) = 0.01. The game logit including opponent adjustments is now:

Logit = -0.36 + 0.72/2 + (-2.45 + 0.01) - (-1.51 + 0.10)

= -1.02

The odds ratio is therefore e

-1.02, which makes the probability of Arizona winning 0.36. This estimate, based on opponent adjustments, is slightly lower than what we got for the unadjusted estimate. This makes sense because Arizona’s strength of schedule was basically average, and Pittsburgh’s was slightly tougher than average.

So there you have it, a complete estimate of Super Bowl XLIII probabilities and a step-by-step method of how I do it.

There are all kinds of variations to play around with. You can choose which weeks of stats to use, to overweight, or to ignore. You can calculate a team’s offensive GWP by holding its own defensive stats average in the calculations, and only adjusting for opponent defensive stats. The resulting OGWP tells us how a team would do on just the strength of its offense alone. It’s the generic win probability assuming the team had a league-average defense. DGWP is vice versa.

One variation I employ is to counter early-season overconfidence by adding a number of dummy weeks of league-average data to each team's stats. This regresses each team's stats to the league mean, which reduces the tendency for team stats to be extreme due to small sample size. For example, it takes about 6 weeks for a team's offensive run efficiency to stabilize near its ultimate season-long average. So at week 3, I'll add 3 games worth of purely average performance into each team's running efficiency stat. No team will sustain either 7.5 yds per rush or 2.2 yds per rush.

This entire process might seem ridiculously convoluted, but it’s actually pretty simple. You get the coefficients from the regression. You next calculate each team’s logit with simple arithmetic. Game probabilities and “GWP” are just a logarithm away. Opponent adjustments require a little more effort, but in the end, you just add them into the logit equation.

Voila--a completely objective, highly accurate NFL game prediction and team ranking system.

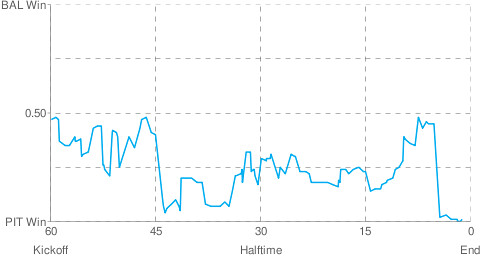

Win probabilities for Super Bowl XLIII are listed below. More info after the jump.

Win probabilities for Super Bowl XLIII are listed below. More info after the jump. PIT at ARI

PIT at ARI

ARI

ARI PIT

PIT

BAL

BAL PHI

PHI

PHI vs BAL

PHI vs BAL

CAR

CAR NYG

NYG TEN

TEN SD

SD